TECHNO LEGAL FRAMEWORK

The Office of the Principal Scientific Adviser to the Government of India has articulated a paradigm shifting approach to AI governance: embedding legal obligations directly into technical architecture. This is governance as an intrinsic design feature, not as a regulatory afterthought.

"AI is already reshaping our polity, our economy, our security and even our society. AI is writing the code for humanity in this century. During our G20 Presidency, we built a consensus on Harnessing AI Responsibly, for Good, and for All. Today, India leads in AI adoption, and techno legal solutions on data privacy."

Hon'ble Prime Minister Narendra Modi, AI Action Summit, Paris, February 2025

EXECUTIVE SUMMARY

The rapid advancement of Artificial Intelligence creates opportunities for innovation but also poses governance challenges that conventional regulatory approaches are proving inadequate to address. Owing to AI's rapidly evolving, adaptive, and borderless nature, the traditional command and control model, where organisations must comply with formal rules set by competent authorities under penalty of law, requires supplementation with a more dynamic framework.

The Techno Legal Framework represents India's answer to this challenge. Rather than treating technology and law as separate domains operating in silos, this approach integrates legal instruments, rule based conditioning, regulatory oversight and technical enforcement mechanisms directly into the technical architecture of AI systems. The result is governance that is not merely a set of external constraints or post facto rules but an intrinsic feature of any AI system, adaptable to evolving risks and contexts.

The techno legal approach not only supports responsible innovation but also ensures that AI technologies, whether developed domestically or sourced from abroad, are aligned with the technical, legal, and ethical norms of the country. It is designed to be transparent for accountability and IPR compliance, explainable in terms of performance and protection, provable with reference to technical safeguards, and enabling in that it unlocks data and AI for innovation.

The PSA White Paper represents a fundamental shift in how India conceptualises technology regulation. By moving from post facto enforcement to design time embedding, the techno legal framework acknowledges that the pace of AI development has outstripped traditional legislative cycles. For practitioners, this means compliance must now be architected into systems from inception, not bolted on after deployment. The most significant implication is that legal and technical teams can no longer operate in silos. Every AI project requires integrated governance from day one.

The Constitutional Foundation

Constitutional Imperative

To uphold the fundamental rights of citizens enshrined in the Constitution: to live with privacy, security, and safety, to access fair information, and to earn from their work in the digital and AI era. The techno legal framework operationalises these constitutional guarantees in the context of artificial intelligence.

Safe and Trusted AI

To ensure that AI systems are trained, developed, deployed, and used in a way that protects citizens' privacy, security, and safety and ensures fair treatment. These are the primary attributes of Safe and Trusted AI that the framework seeks to guarantee across the entire lifecycle.

Technical Safeguards

Through technical safeguards and governance mechanisms that together ensure the transparency, accountability, explainability, provability, and enabling nature of AI systems. The framework specifies both technical and non technical controls at each lifecycle stage.

Four Governance Priorities

The techno legal approach strengthens India's AI governance framework through four strategic dimensions:

Scale & Consistency

Enforcement through standardised, automated checks that can operate at population scale without compromising consistency.

Measurable Accountability

Via logs, attestations, and audit trails that create verifiable records of compliance and enable post facto analysis.

Inclusive Compliance

Low cost compliance leveraging Digital Public Infrastructure to support smaller firms and public agencies alongside large enterprises.

Future Readiness

Allowing rapid calibration in response to evolving risks, model updates, and emerging AI capabilities without requiring legislative amendment.
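The Measurable Accountability priority depends on logs and audit trails that can be verified after the fact. The framework does not prescribe a log format, so what follows is only a minimal Python sketch, assuming a hash-chained, append-only log in which each entry commits to the one before it. The `AuditLog` class, its fields and the sample events are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, actor: str, action: str, detail: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"actor": actor, "action": action, "detail": detail, "prev": prev_hash}
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: entry[k] for k in ("actor", "action", "detail", "prev")}
            if entry["prev"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("model-service", "inference", {"model": "credit-v2", "consent_id": "c-101"})
log.append("auditor", "attestation", {"check": "bias-eval", "passed": True})
print(log.verify())  # True
log.entries[0]["detail"]["model"] = "credit-v3"  # simulate after-the-fact tampering
print(log.verify())  # False
```

Chaining hashes makes silent edits detectable but does not prevent them; a production system would also need signed entries or an external anchor for the latest hash, which this sketch leaves out.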

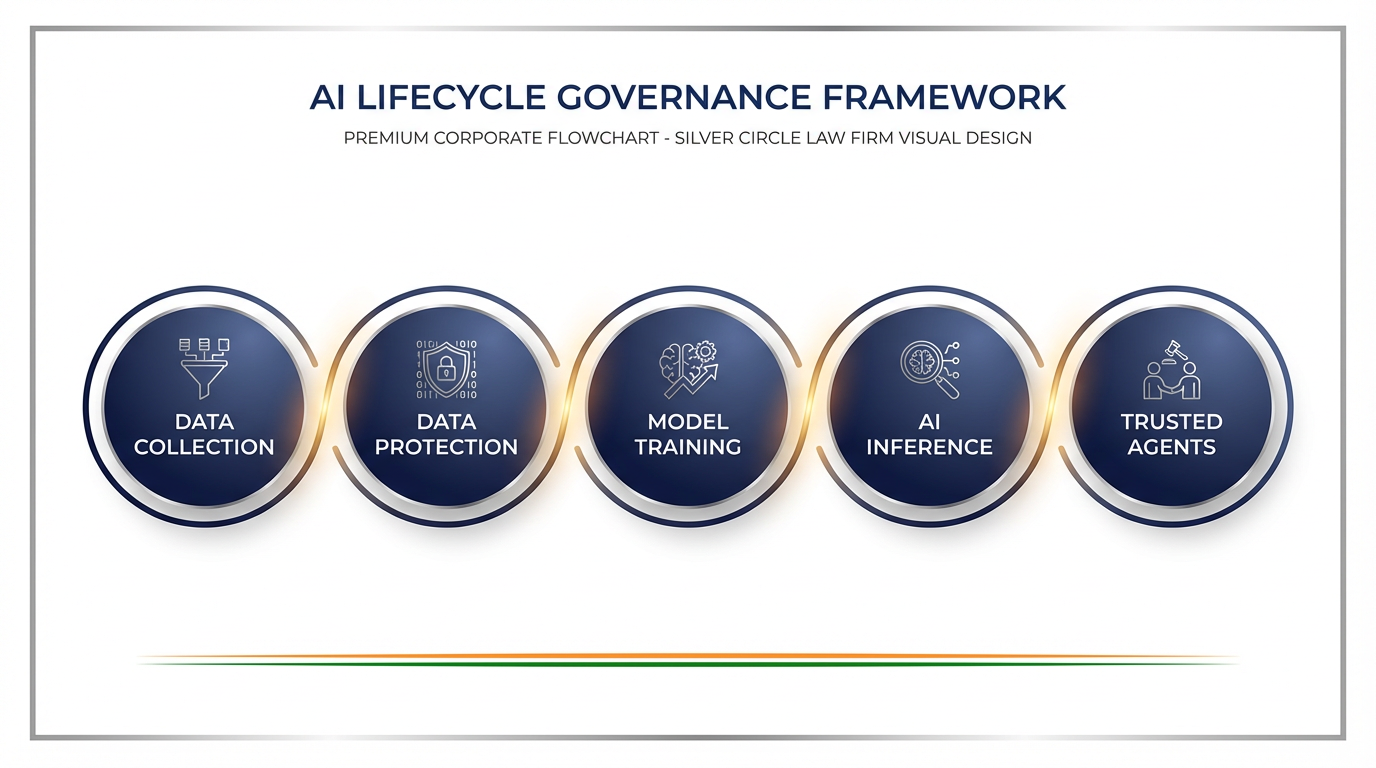

The Five Lifecycle Stages

PSA White Paper: Safe and Trusted AI Across the AI Lifecycle

Data Collection

Conception stage covering all types of data, extending beyond personal data. Establishes foundational governance for the entire AI value chain.

Data in Use Protection

Technical and non technical measures including consent based access control, privacy enhancing technologies, and data anonymisation techniques.

AI Training & Model Assessment

Governance of training processes including data poisoning detection, bias evaluation, and model documentation through standardised model cards.

Safe AI Inference

Responsible AI implementation including LLM guardrails, output filtering, and monitoring for discriminative and generative AI models.

Trusted Agents

Governance of agentic AI systems with enhanced requirements for autonomy, decision making authority, and human oversight mechanisms.
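The AI Training & Model Assessment stage calls for model documentation through standardised model cards. The White Paper's own schema is not reproduced here; the sketch below assumes a handful of illustrative fields, including the languages a model was evaluated on (reflecting the attention to linguistic diversity in fairness assessments), plus a check that flags documentation still missing before release. All field names are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Hypothetical minimal model card; field names are illustrative, not a mandated schema."""
    model_name: str
    version: str
    intended_use: str
    training_data_sources: list
    languages_evaluated: list
    fairness_metrics: dict = field(default_factory=dict)
    poisoning_checks_passed: bool = False

    def missing_fields(self) -> list:
        """Return required documentation items that are still empty."""
        required = ["intended_use", "training_data_sources",
                    "languages_evaluated", "fairness_metrics"]
        return [name for name in required if not getattr(self, name)]

    def to_json(self) -> str:
        # Serialise the whole card so it can be attached to an audit record.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


card = ModelCard(
    model_name="loan-screening",
    version="1.0.0",
    intended_use="Pre-screening of retail loan applications with human review",
    training_data_sources=["internal-applications-2019-2023"],
    languages_evaluated=["hi", "en", "ta"],
)
print(card.missing_fields())  # ['fairness_metrics']
```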

Institutional Architecture

AI Governance Group (AIGG)

The apex coordinating body responsible for providing guidance on AI governance, hearing grievances, and pronouncing decisions on complaints. AIGG ensures coordination, consistency, and continuous learning across the ecosystem.

- Policy coordination across ministries
- Grievance redressal mechanism
- Standard setting recommendations

Technology & Policy Expert Committee (TPEC)

Provides technical expertise for standard setting and framework development. TPEC bridges the gap between regulatory intent and technical implementation through domain specific guidance.

- Technical standards development
- Framework operationalisation
- Sector specific guidance

AI Safety Institute (AISI)

India's dedicated institution for AI safety research, evaluation of high risk systems, and development of safety frameworks and toolkits. AISI coordinates with global safety institutes and standard setting bodies.

- Safety tools research and development
- High risk system evaluation
- International safety coordination

National AI Incident Database

A national repository to record, classify, and analyse AI related risks and incidents including safety failures, biased outcomes, security breaches, and misuse. Enables evidence based refinement of controls.

- India specific risk taxonomy
- Systemic trend detection
- Data driven audit support
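To illustrate how the National AI Incident Database could support systemic trend detection, here is a minimal sketch that classifies reports using the four incident types the framework names (safety failures, biased outcomes, security breaches, misuse) and flags sector and category combinations that recur. The `sector` field, the record layout and the default threshold of three reports are assumptions for illustration, not part of the proposed taxonomy.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class IncidentCategory(Enum):
    # The four incident types named in the framework's description of the database.
    SAFETY_FAILURE = "safety_failure"
    BIASED_OUTCOME = "biased_outcome"
    SECURITY_BREACH = "security_breach"
    MISUSE = "misuse"


@dataclass(frozen=True)
class Incident:
    incident_id: str
    sector: str  # hypothetical field, e.g. "health" or "finance"
    category: IncidentCategory
    system_name: str


def systemic_trends(incidents, threshold: int = 3) -> dict:
    """Flag (sector, category) pairs reported at least `threshold` times.

    The threshold is an illustrative assumption, not a figure from the framework.
    """
    counts = Counter((i.sector, i.category) for i in incidents)
    return {pair: n for pair, n in counts.items() if n >= threshold}


reports = [
    Incident("INC-1", "finance", IncidentCategory.BIASED_OUTCOME, "loan-screening"),
    Incident("INC-2", "finance", IncidentCategory.BIASED_OUTCOME, "credit-limit"),
    Incident("INC-3", "finance", IncidentCategory.BIASED_OUTCOME, "loan-screening"),
    Incident("INC-4", "health", IncidentCategory.SAFETY_FAILURE, "triage-bot"),
]
print(systemic_trends(reports))
```

Grouping by sector rather than by individual system is what lets repeated problems across different vendors surface as a pattern, which is the point of a national rather than per-organisation register.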

What strikes me most about the framework is its recognition that India's AI governance cannot simply mirror Western templates. The emphasis on Digital Public Infrastructure integration, the acknowledgement of linguistic and demographic diversity in fairness assessments, and the explicit attention to Global South realities demonstrate a contextually grounded approach. The proposed AI Safety Institute and National AI Incident Database create the institutional infrastructure that has been missing. This is not regulation for regulation's sake but governance designed to enable innovation while protecting constitutional values.

Technical Safeguards

The framework specifies technical controls that embed legal obligations directly at design and development stages, allowing system controls to support compliance on an ongoing basis.
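As an example of a legal obligation enforced by a system control, consider Consent Based Access Control, one of the safeguards listed in this section. The sketch assumes each consent record stores the data principal, the permitted purposes and an expiry date, and it refuses processing when any of these conditions fails. The `Consent` record, `ConsentError` and the purpose names are hypothetical; a real deployment would read consent from whatever consent management infrastructure applies.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Consent:
    """Hypothetical consent record: who consented, for which purposes, until when."""
    principal_id: str
    purposes: frozenset
    valid_until: date
    withdrawn: bool = False


class ConsentError(PermissionError):
    """Raised when processing is attempted outside the recorded consent."""


def check_consent(consent: Consent, principal_id: str, purpose: str, today: date) -> None:
    # Each check mirrors one consent parameter; failing any one blocks processing.
    if consent.principal_id != principal_id:
        raise ConsentError("consent belongs to a different data principal")
    if consent.withdrawn:
        raise ConsentError("consent has been withdrawn")
    if today > consent.valid_until:
        raise ConsentError("consent has expired")
    if purpose not in consent.purposes:
        raise ConsentError(f"purpose '{purpose}' was not consented to")


def process_record(record: dict, consent: Consent, purpose: str, today: date) -> dict:
    check_consent(consent, record["principal_id"], purpose, today)
    # Only reached when every consent condition holds.
    return {"principal_id": record["principal_id"], "purpose": purpose, "status": "processed"}


consent = Consent("user-42", frozenset({"credit_scoring"}), date(2026, 3, 31))
record = {"principal_id": "user-42", "income": 55000}
print(process_record(record, consent, "credit_scoring", date(2025, 6, 1))["status"])  # processed
try:
    process_record(record, consent, "marketing", date(2025, 6, 1))
except ConsentError as err:
    print(err)  # purpose 'marketing' was not consented to
```

Passing `today` explicitly keeps the check deterministic and testable; placing the check inside `process_record` means no code path can reach the data without it.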

Consent Based Access Control

Technical mechanisms ensuring data processing aligns with consent parameters

Privacy Enhancing Technologies

Differential privacy, federated learning, and secure multi party computation

Data Poisoning Detection

Automated detection of training data manipulation and adversarial inputs

AI Threat Modelling

Systematic identification of AI specific security vulnerabilities and risks

Impact Assessment Tools

Algorithmic impact assessments and fairness evaluation frameworks

LLM & Agentic Guardrails

Output filtering, content moderation, and autonomous action constraints
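Of the privacy enhancing technologies listed, differential privacy is the easiest to show in a few lines. The sketch below uses the standard Laplace mechanism for a counting query: because one person can change a count by at most 1, adding Laplace noise with scale 1/ε gives ε-differential privacy. It is illustrative only; naive floating-point sampling like this has known weaknesses, and production systems should rely on a vetted differential privacy library. The function names and sample data are hypothetical.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two i.i.d. exponentials."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Release a count under epsilon-differential privacy via the Laplace mechanism.

    Adding or removing one person changes a count by at most 1, so the
    sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


ages = [23, 35, 41, 52, 67, 29, 71, 38]
rng = random.Random(7)  # fixed seed only so the sketch is reproducible
print(round(private_count(ages, lambda a: a >= 60, epsilon=0.5, rng=rng), 2))
```

A smaller ε means more noise and stronger privacy; choosing ε is a policy decision as much as a technical one, which is where governance guidance would apply.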

Cross Border Considerations

AI models are developed and deployed across multiple jurisdictions, each with its own legal standards and enforcement mechanisms. A model trained or governed under one country's framework may not incorporate the safeguards required by another. For India, this means that even with a strong domestic framework, many globally accessed AI systems may not embed the protections India requires.

The framework identifies core features significant at global level where meaningful convergence is feasible: privacy, security, safety, reliability, explainability, transparency, accountability, and inclusivity. Alignment is supported by developing techno legal tools to operationalise these features alongside parallel standard setting.

The AI Safety Institute, through its network of global safety institutes, can inform AIGG by identifying alignment between national AI governance frameworks and emerging international norms.

India AI Governance: Bridging Domestic and International Frameworks

India's Unique Approach

How the techno legal framework differs from conventional regulatory approaches

| Dimension | Framework Approach |
|---|---|
| Objective | Empowers innovation with governance and technical controls across the AI lifecycle, driving Responsible AI by design in a techno legal way. This is law plus, including voluntary frameworks. |
| Approach | Solution oriented techno legal approach to developing, deploying, and using AI across its lifecycle while mitigating risks and providing clarity for the AI ecosystem. |
| Key Difference | Provides a path to unlock data and AI with the right governance and technical controls to accelerate innovation. Preventing risks with controls across the lifecycle is prioritised and incentivised. |
| Incentivisation | Larger deployments that touch more citizens or carry higher perceived risks should have advanced levels of governance, transparency, and technical controls. The scale of perceived risk determines compliance intensity. |
| Outcome | Balances innovation with risk mitigation using technical controls and governance. Responsible AI by Design at population scale can result in global trust and adoption. |
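The Incentivisation row ties compliance intensity to deployment scale and perceived risk. One way to express that rule, assuming three tiers, is sketched below: either a large user base or a high risk rating can raise the tier, and higher tiers add controls on top of lower ones. The user-count cut-offs, tier contents and names are hypothetical; the framework does not publish numeric thresholds in this summary.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical control sets; each tier also inherits everything from the tiers below it.
TIER_CONTROLS = {
    1: ["model card", "basic logging"],
    2: ["bias evaluation", "incident reporting"],
    3: ["independent audit", "human oversight of decisions", "third-party evaluation"],
}


def compliance_tier(users_reached: int, risk: Risk) -> int:
    """Return a tier from 1 to 3; scale and risk can each raise it.

    The user-count cut-offs are illustrative assumptions, not figures from the framework.
    """
    scale_tier = 1 if users_reached < 100_000 else 2 if users_reached < 10_000_000 else 3
    return max(scale_tier, int(risk))


def required_controls(users_reached: int, risk: Risk) -> list:
    tier = compliance_tier(users_reached, risk)
    controls = []
    for level in range(1, tier + 1):
        controls.extend(TIER_CONTROLS[level])
    return controls


print(compliance_tier(5_000, Risk.LOW))       # 1
print(compliance_tier(50_000_000, Risk.LOW))  # 3
print(compliance_tier(5_000, Risk.HIGH))      # 3
```

Taking the maximum of the two signals means a small but high-risk deployment still receives the strictest controls, matching the table's emphasis on perceived risk as well as scale.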

Strategic Implications

The PSA White Paper on Techno Legal Framework represents a maturation of India's approach to AI governance. Rather than choosing between innovation and safety, the framework provides mechanisms to achieve both. By embedding legal requirements into technical architecture, the framework turns compliance into a design feature rather than a regulatory burden.

For organisations operating in India, the implications are significant. AI systems must be designed from inception with governance controls built in. Technical teams must work alongside legal counsel throughout the development lifecycle. Documentation practices must support audit and accountability requirements.

The framework also signals India's intent to shape global AI governance norms rather than simply adopt them. By developing contextually appropriate mechanisms grounded in Digital Public Infrastructure experience, India positions itself as a laboratory for inclusive AI governance that can serve as a template for other developing economies.

AMLEGALS AI Policy Hub • Strategic Intelligence